Signs you should see a doctor about a wound

There are a few situations in which you should see your doctor as soon as you realize something’s not right. It may sound dramatic, but seeing a doctor for proper care can make the difference between healing and losing a limb. Call your doctor if the wound:

• Was caused by an animal bite

• Is painful

• Looks infected

• Is more than half an inch deep

• Was caused by something very dirty or rusty

• Has dirt or other debris stuck in it

• Is very close to the eye

You should also see a doctor if your wound doesn’t seem to be healing on its own. This may be a sign of an infection. Health conditions that cause poor blood circulation, such as vascular disease, diabetes, obesity, and high blood pressure, can also make it difficult for a wound to heal properly.

What will a doctor do about a wound?

The treatment of your wound will depend on what caused it and how deep and serious it is. In some cases, you may be prescribed antibiotics, such as penicillin, and possibly pain medication. To care for your wound, your doctor may use negative pressure wound therapy, skin grafting, hyperbaric oxygen therapy, revascularization, or excisional debridement. Your doctor will also want to determine why the wound wasn’t healing properly on its own so that issue can be addressed as well.

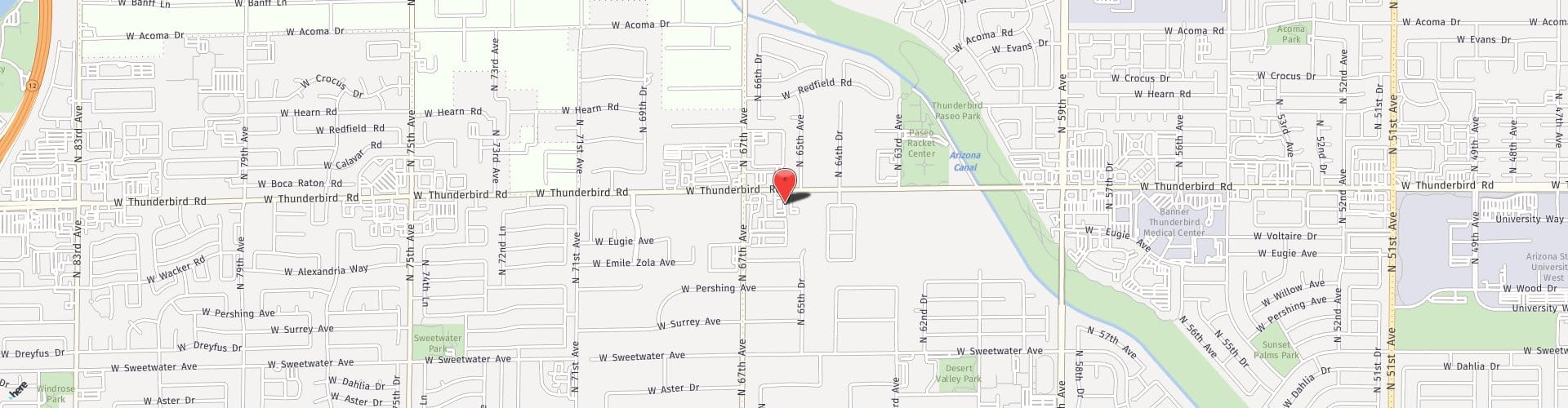

Although many wounds can be left alone to heal, some are too serious for this. If you are concerned about a wound that just doesn’t seem right, contact the Glendale, Arizona, office of Dr. Sammy A. Zakhary. Call (623) 258-3255 for an appointment today!